Cameras define the point of view, perspective and aspect from which the scene is rendered.

Cloning

Cloners instantiate multiple copies of the geometry nodes parented to them, such as 3D Objects, Text Nodes and Shape 3D nodes.
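
To illustrate the general idea (not Notch's internal implementation), a grid cloner can be thought of as computing one offset per copy and then translating a copy of the child geometry by each offset. This is a minimal sketch; the function names `grid_clone_positions` and `clone_mesh` are hypothetical.

```python
from itertools import product


def grid_clone_positions(counts, spacing):
    """Return one (x, y, z) offset per clone, on a grid centred on the origin.

    Hypothetical helper for illustration only.
    """
    nx, ny, nz = counts
    positions = []
    for i, j, k in product(range(nx), range(ny), range(nz)):
        # Map index 0..n-1 to -(n-1)/2 .. (n-1)/2 so the grid is centred.
        positions.append((
            (i - (nx - 1) / 2) * spacing,
            (j - (ny - 1) / 2) * spacing,
            (k - (nz - 1) / 2) * spacing,
        ))
    return positions


def clone_mesh(vertices, offsets):
    """Instantiate one translated copy of the child's vertices per offset."""
    return [
        [(x + ox, y + oy, z + oz) for (x, y, z) in vertices]
        for (ox, oy, oz) in offsets
    ]


offsets = grid_clone_positions((3, 1, 1), 2.0)
copies = clone_mesh([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)], offsets)
```

Each copy shares the child's shape; only its placement differs, which is why cloning is cheap compared with duplicating nodes by hand.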

Deformers

Deformers modify the vertex positions of geometry nodes (such as 3D Objects, Text Nodes, Shape 3D nodes and particle systems) that the deformer is parented to.
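
As a generic example of what "modifying vertex positions" means, a twist deformer rotates each vertex about the vertical axis by an angle that grows with the vertex's height. This is a minimal sketch of the technique, not Notch's deformer code; `twist_deform` and `twist_per_unit` are hypothetical names.

```python
import math


def twist_deform(vertices, twist_per_unit):
    """Rotate each vertex about the Y axis by an angle proportional to its height.

    twist_per_unit is in radians per unit of y (hypothetical parameter).
    """
    deformed = []
    for x, y, z in vertices:
        angle = twist_per_unit * y
        c, s = math.cos(angle), math.sin(angle)
        # Rotation in the XZ plane; the y coordinate is left unchanged.
        deformed.append((x * c - z * s, y, x * s + z * c))
    return deformed


result = twist_deform([(1.0, 0.0, 0.0), (1.0, 1.0, 0.0)], math.pi / 2)
```

The mesh topology is untouched; only the positions change, so the same deformer can be applied to any geometry it is parented to.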

Fields

Fields are often used to simulate volumetric and fluid-like effects such as smoke. They discretise 2D or 3D regions of space into texels (2D) or voxels (3D) and store colour and velocity data for each cell.
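
The sketch below shows the general data layout described above: a 2D region split into texels, each holding a colour and a velocity, plus one step that moves colour along the velocity field. It uses simple semi-Lagrangian advection with nearest-texel sampling as an illustrative technique; it is an assumption for explanation, not Notch's solver, and `Field2D` and its methods are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Field2D:
    """A 2D region of space discretised into width x height texels (hypothetical)."""
    width: int
    height: int
    cell_size: float
    colour: list = field(init=False)
    velocity: list = field(init=False)

    def __post_init__(self):
        n = self.width * self.height
        self.colour = [(0.0, 0.0, 0.0)] * n   # RGB per texel
        self.velocity = [(0.0, 0.0)] * n      # (vx, vy) per texel, world units per second

    def index(self, i, j):
        return j * self.width + i

    def cell_at(self, x, y):
        """Map a world-space position to the texel containing it, clamped to the grid."""
        i = min(max(int(x // self.cell_size), 0), self.width - 1)
        j = min(max(int(y // self.cell_size), 0), self.height - 1)
        return i, j

    def advect_colour(self, dt):
        """Carry colour along the velocity field (semi-Lagrangian, nearest-texel sampling)."""
        new_colour = list(self.colour)
        for j in range(self.height):
            for i in range(self.width):
                vx, vy = self.velocity[self.index(i, j)]
                # Trace back from this texel's centre to where its contents came from.
                x = (i + 0.5) * self.cell_size - vx * dt
                y = (j + 0.5) * self.cell_size - vy * dt
                si, sj = self.cell_at(x, y)
                new_colour[self.index(i, j)] = self.colour[self.index(si, sj)]
        self.colour = new_colour


f = Field2D(4, 1, 1.0)
f.colour[f.index(0, 0)] = (1.0, 0.0, 0.0)   # a red texel at the left edge
f.velocity = [(1.0, 0.0)] * 4               # uniform flow to the right
f.advect_colour(1.0)
```

A 3D voxel field follows the same pattern with a third index and a third velocity component.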

Generators

Generators create patterns mathematically in 2D or 3D.

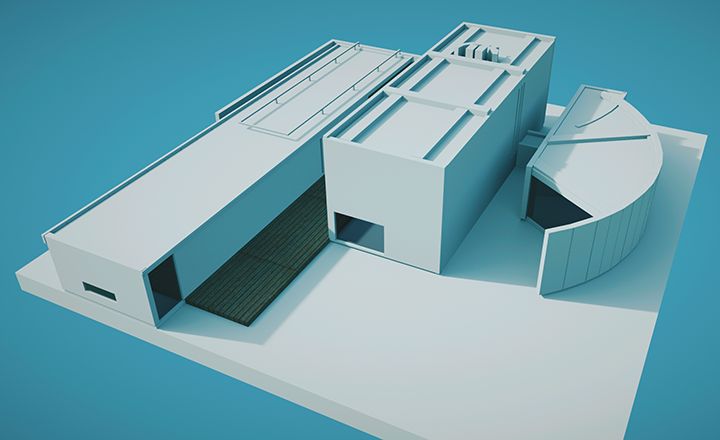

Geometry

Geometry nodes are used to generate and/or render meshes, animated geometry and skeletal rigs.

Interactive

Interactive nodes allow external inputs to be used in scenes. Sources include keyboard and mouse, clock time, and Internet sources like Twitter and RSS feeds.

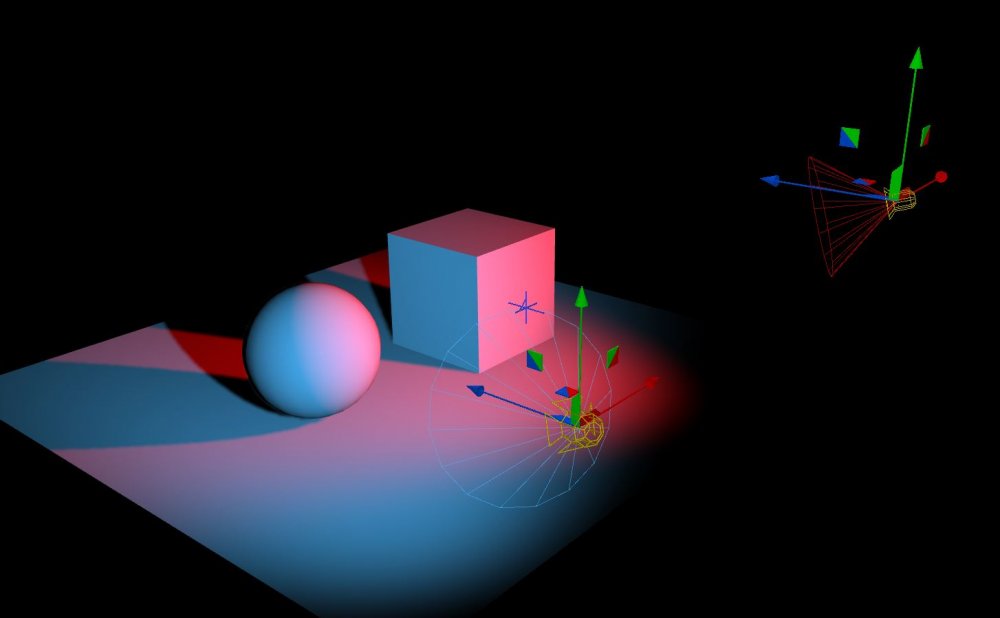

Lighting

Lighting nodes control how a scene is lit.

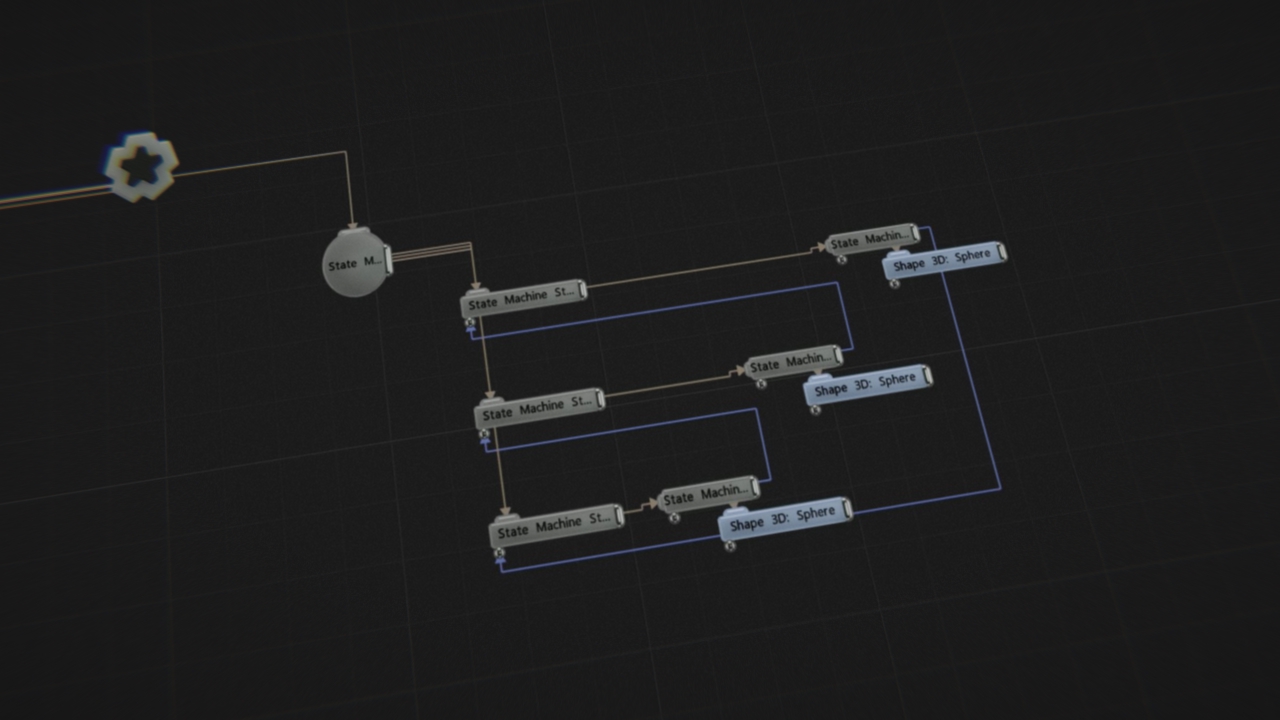

Logic

The Logic nodes are used to create a meta logic system within Notch.

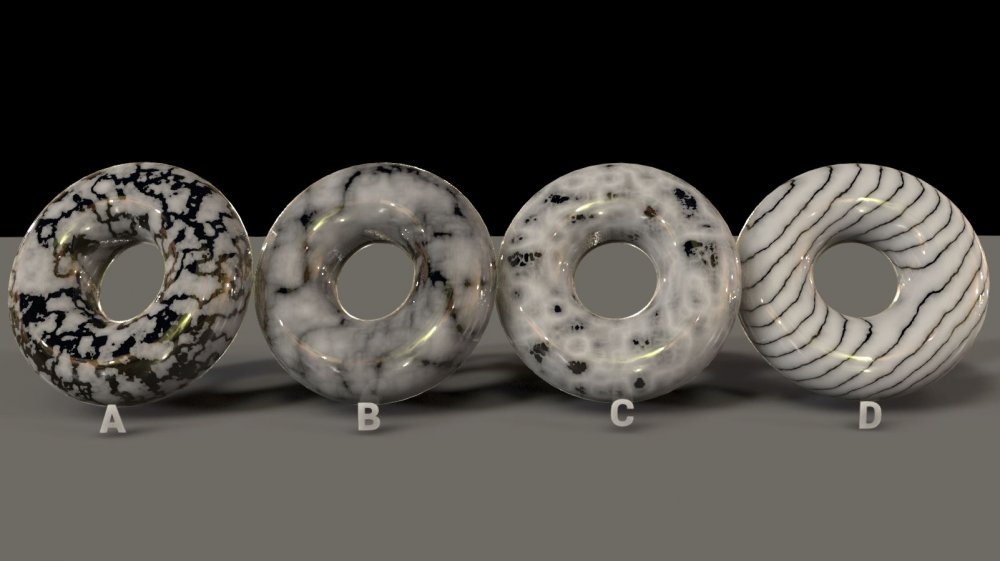

Materials

Material nodes control how light interacts with the surfaces of objects.

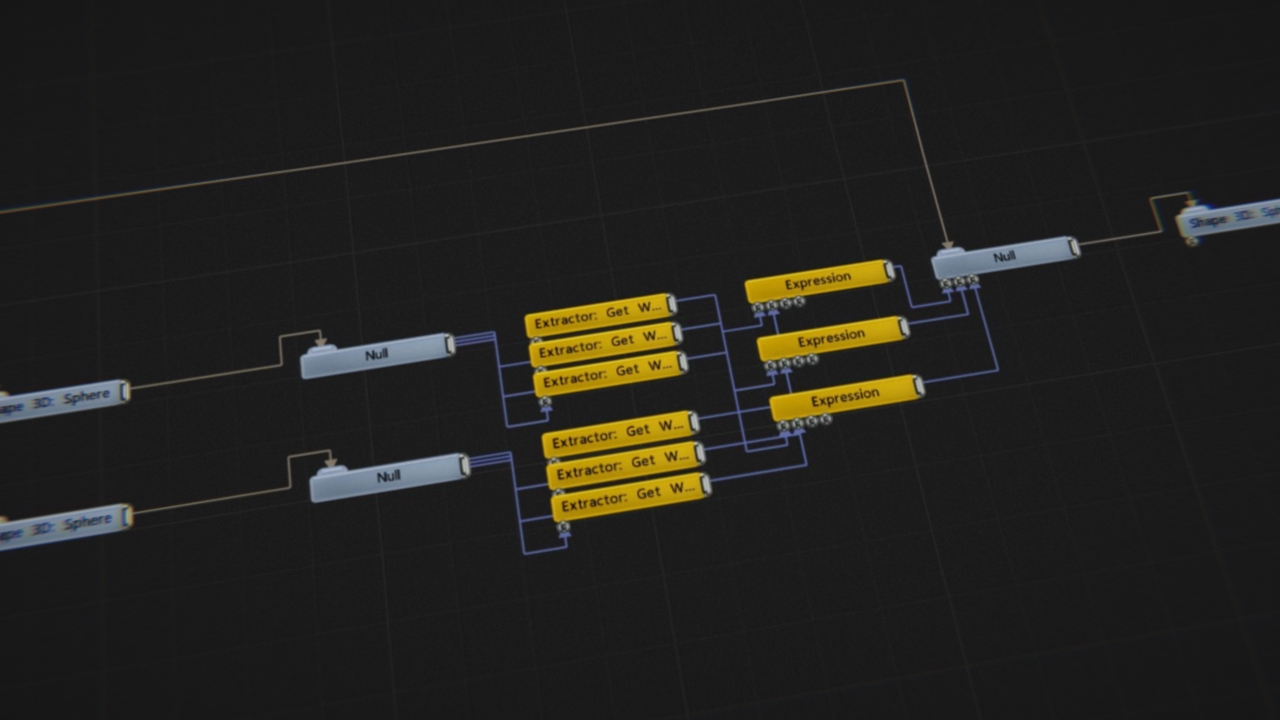

Modifiers

Modifier nodes modify the attributes of other nodes.

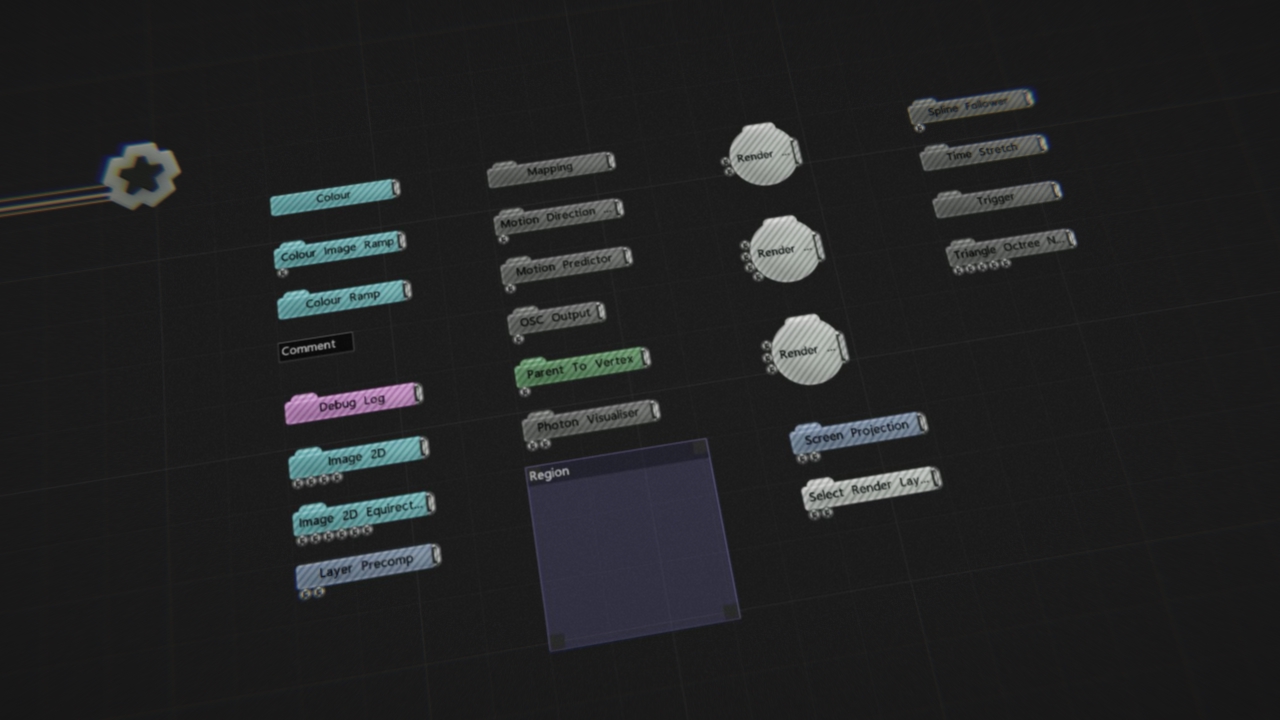

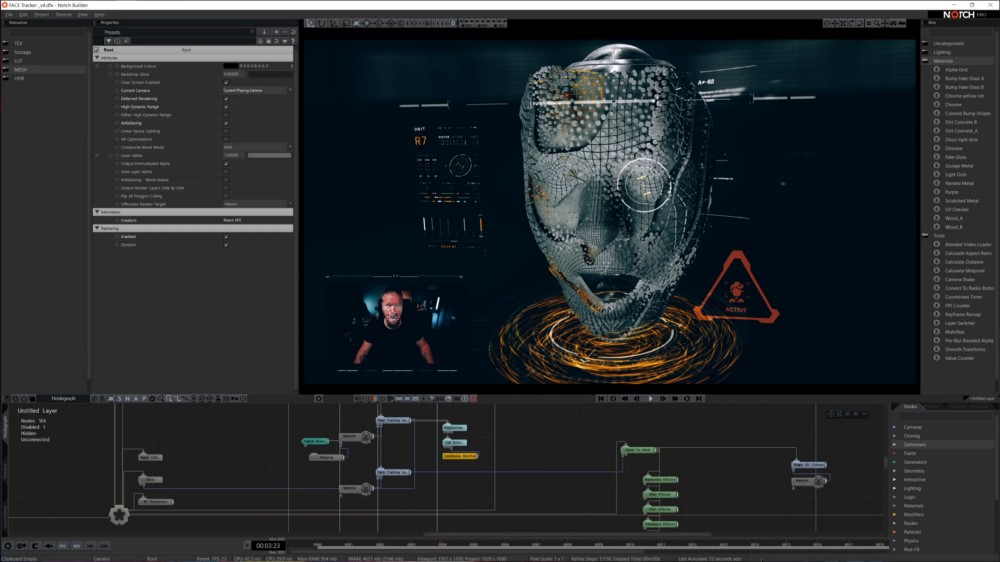

Nodes

This section contains a mostly miscellaneous group of nodes.

Particles

Particle nodes simulate particle-like effects.

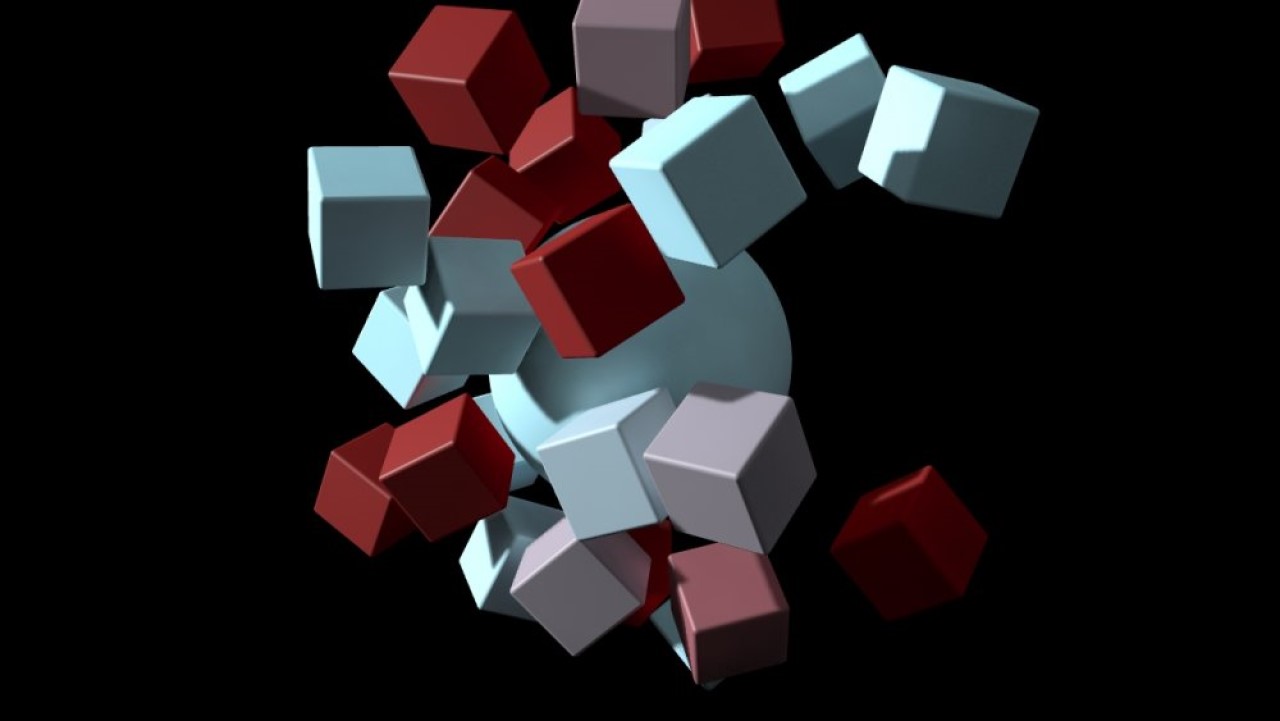

Physics

Physics nodes allow you to create simple physics systems and dynamic movements for objects in your scene.

Post-FX

Post-FX nodes perform image processing on the node they are parented to.

Procedural

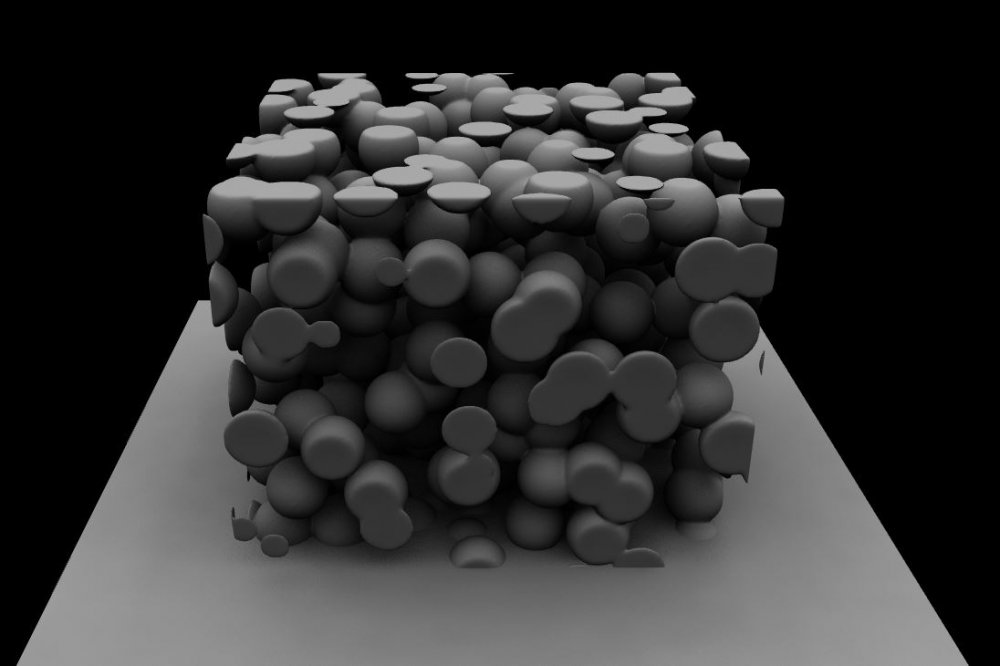

Procedural nodes are used to generate geometric and volumetric forms implicitly using signed distance fields.
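
A signed distance field describes a shape implicitly: a function returns the distance from any point to the nearest surface, negative inside and positive outside. The minimal sketch below shows two common primitives, a union via `min`, and sphere tracing (stepping along a ray by the distance value) to find a surface. It illustrates the general technique only; the function names are hypothetical and are not Notch's API.

```python
import math


def sd_sphere(p, centre, radius):
    """Signed distance to a sphere: negative inside, zero on the surface, positive outside."""
    return math.dist(p, centre) - radius


def sd_box(p, half_extents):
    """Signed distance to an axis-aligned box centred on the origin."""
    q = [abs(c) - h for c, h in zip(p, half_extents)]
    outside = math.sqrt(sum(max(c, 0.0) ** 2 for c in q))
    inside = min(max(q), 0.0)
    return outside + inside


def scene(p):
    # The union of two shapes is the minimum of their distances.
    return min(sd_sphere(p, (0.0, 0.0, 0.0), 1.0), sd_box(p, (0.5, 0.5, 0.5)))


def sphere_trace(origin, direction, max_steps=128, eps=1e-4, max_dist=100.0):
    """March along a ray (direction assumed unit length); return hit distance or None."""
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        d = scene(p)
        if d < eps:
            return t
        # It is safe to advance by d: no surface is closer than that.
        t += d
        if t > max_dist:
            break
    return None


hit = sphere_trace((0.0, 0.0, -5.0), (0.0, 0.0, 1.0))
miss = sphere_trace((0.0, 0.0, -5.0), (0.0, 1.0, 0.0))
```

Because shapes are just functions, combining them (union, intersection, blending) is a matter of combining their distance values rather than editing a mesh.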

Ray-Tracing

Ray tracing is a rendering technique that calculates lighting, shadows and reflections in a 3D scene more accurately than rasterisation, producing more "photorealistic" results.

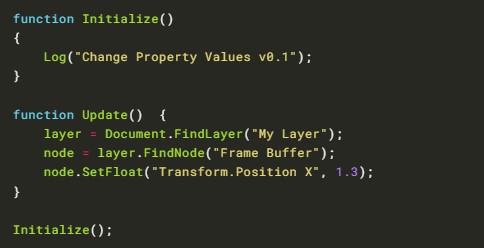

Scripting

Scripting nodes allow project behaviour to be scripted using JavaScript.

Shading Nodes

These nodes change how material nodes are applied to an object and how materials are shaded on the object's surface.

Sound

These nodes control sound and sound output in Notch.

Text Strings

These nodes handle text strings, either generating them from other data types or modifying and combining them.

Video Processing

Video Processing nodes perform image processing while also storing a new copy of the image. This allows processing chains with multiple branches to be created.